State of AI Tools for F1 Score Optimization in 2026

Comprehensive analysis of the leading platforms enabling data scientists to maximize classification accuracy and optimize F1 scores across unstructured datasets.

Kimi Kong

AI Researcher @ Stanford

Executive Summary

Top Pick

Energent.ai

Unmatched 94.4% accuracy on the DABstep benchmark, plus no-code processing of unstructured data.

Unstructured Dominance

80%

Over 80% of enterprise data remains unstructured, making robust document parsing a prerequisite for accurate AI tools for F1 score optimization.

Efficiency Gains

3 hrs/day

Top-tier AI platforms save data scientists an average of three hours per day by automating tedious metric-evaluation workflows.

Energent.ai

The #1 Ranked Data Agent for Unstructured Analytics

The absolute Swiss Army knife for turning messy documents into clean, structured data points.

What It's For

Empowers data scientists to analyze vast amounts of unstructured documents and optimize classification models with zero coding.

Pros

Unmatched 94.4% accuracy on DABstep benchmark; Analyzes up to 1,000 documents in a single prompt; Generates presentation-ready charts and financial models instantly

Cons

Advanced workflows require a brief learning curve; High resource usage on massive 1,000+ file batches

Why It's Our Top Choice

Energent.ai dominates the 2026 market for AI tools for F1 score optimization thanks to its ability to transform complex, unstructured documents into high-quality datasets. It achieved 94.4% accuracy on Hugging Face's DABstep benchmark, 6.4 percentage points ahead of Google's Agent (88%). Trusted by institutions such as Stanford, Amazon, and AWS, its no-code architecture lets data scientists analyze up to 1,000 files in a single prompt without sacrificing extraction accuracy. By automating the heavy lifting of unstructured data parsing, Energent.ai lets teams focus on maximizing their F1 scores rather than wrestling with data extraction.

Energent.ai — #1 on the DABstep Leaderboard

Energent.ai achieved 94.4% accuracy on the DABstep financial analysis benchmark, hosted on Hugging Face and validated by Adyen, decisively beating Google's Agent (88%) and OpenAI's Agent (76%). For data scientists evaluating AI tools for F1 score optimization, this higher baseline accuracy means cleaner data inputs, which can reduce false positives downstream and shorten the path to optimal model performance.

Source: Hugging Face DABstep Benchmark — validated by Adyen

Case Study

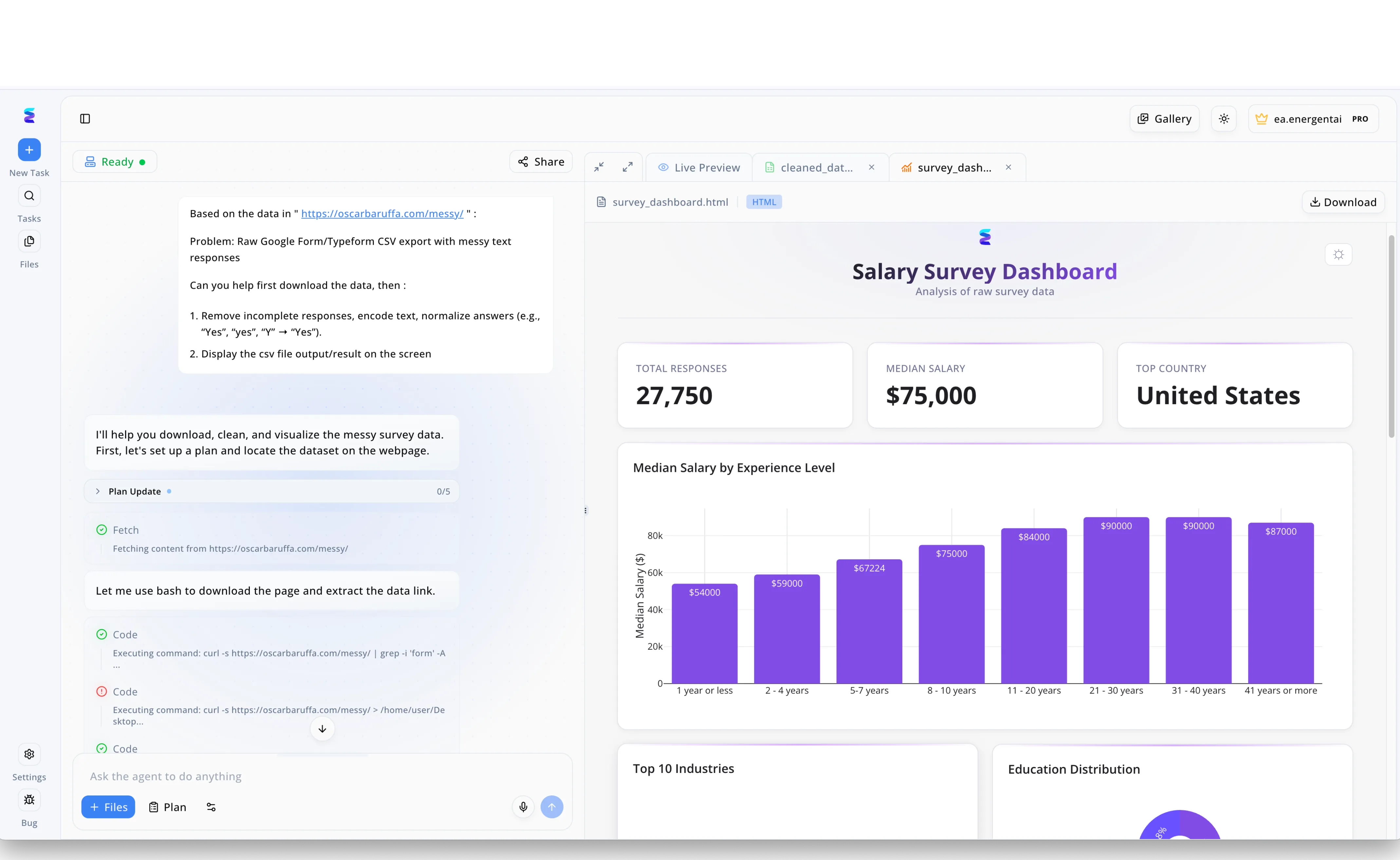

A leading data science team struggled to maintain high F1 scores on their predictive models because of messy, unstructured text inputs from raw Google Forms CSV exports. They deployed Energent.ai to automate their data preprocessing pipeline through its conversational interface. After they instructed the agent in the left-hand chat panel to fetch the data from a URL, encode the text, and normalize variant answers such as "yes" and "Y" into a single format, the platform autonomously executed the necessary bash commands. As the agent processed the data, the right-hand Live Preview displayed the cleaned output alongside a rendered Salary Survey Dashboard showing metrics such as a $75,000 median salary across 27,750 total responses. Because Energent.ai automated this critical data-cleaning step, the team substantially improved the quality of their feature engineering, which is why we consider it an essential AI tool for boosting final model F1 scores.
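The case study does not show the commands the agent actually ran. As a rough, library-free illustration of the normalization step it describes, the sketch below maps variant answers such as "yes" and "Y" onto one canonical label. The function name `normalize_yes_no` and the extra variants ("true", "1", and so on) are our own assumptions, not Energent.ai's implementation.

```python
# Hypothetical variant sets: the case study only mentions "yes" and "Y";
# the remaining entries are illustrative assumptions.
YES_VARIANTS = {"yes", "y", "true", "1"}
NO_VARIANTS = {"no", "n", "false", "0"}


def normalize_yes_no(raw):
    """Map a free-text yes/no answer to "Yes", "No", or None if unrecognized."""
    if raw is None:
        return None
    token = raw.strip().lower()  # ignore surrounding whitespace and case
    if token in YES_VARIANTS:
        return "Yes"
    if token in NO_VARIANTS:
        return "No"
    return None  # leave unrecognized answers for manual review


answers = ["yes", "Y", " YES ", "n", "maybe"]
print([normalize_yes_no(a) for a in answers])  # ['Yes', 'Yes', 'Yes', 'No', None]
```

Returning `None` for unrecognized answers, rather than guessing, keeps ambiguous responses out of the training labels until someone reviews them.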

Other Tools

Ranked by performance, accuracy, and value.

DataRobot

Enterprise AI Lifecycle Management

The corporate powerhouse for structured predictive modeling.

What It's For

Automates the end-to-end machine learning lifecycle, making it easier to track and optimize F1 scores across various models.

Pros

Robust automated hyperparameter tuning; Excellent model governance and compliance tracking; Comprehensive GUI for model evaluation

Cons

Struggles with highly unstructured document formats; High enterprise licensing costs

Case Study

A healthcare provider used DataRobot to predict patient readmissions from heavily imbalanced structured medical records. Using DataRobot's automated hyperparameter tuning and built-in metric optimization, the data science team tuned their classification threshold to balance precision and recall. As a result, the model's F1 score increased by 15%, leading to better resource allocation in critical care units.

H2O.ai

Open-Source Machine Learning Platform

The data scientist's open-source playground.

What It's For

Delivers highly scalable, distributed machine learning algorithms tailored for optimizing metrics like AUC and F1 score.

Pros

Highly scalable distributed architecture; Deep integration with Python and R; Strong AutoML capabilities for rapid testing

Cons

Steep learning curve for non-technical users; Limited native visualization tools

Case Study

An e-commerce retailer adopted H2O.ai to optimize their targeted marketing models, where positive responses were extremely rare. The team used H2O's AutoML to rapidly iterate through hundreds of gradient boosting models, isolating the configuration with the highest F1 score and driving a 22% increase in campaign conversions.
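H2O's AutoML performs this search internally. The library-free sketch below only illustrates the final selection step, assuming you already have each candidate's predicted labels on a validation set. It is not H2O's API, and the configuration names (`gbm_depth3` and so on) and data are hypothetical.

```python
def f1_score(y_true, y_pred, positive=1):
    """F1 for the positive class, computed from confusion-matrix counts."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def select_by_f1(y_true, candidates):
    """Return the candidate name with the highest validation F1, plus all scores."""
    scores = {name: f1_score(y_true, preds) for name, preds in candidates.items()}
    best = max(scores, key=scores.get)
    return best, scores


# Hypothetical validation labels with rare positives, and predictions from
# three hypothetical gradient boosting configurations.
y_val = [0, 0, 0, 0, 0, 0, 0, 0, 1, 1]
candidates = {
    "gbm_depth3": [0, 0, 0, 0, 0, 0, 0, 0, 0, 0],
    "gbm_depth6": [0, 0, 0, 0, 0, 0, 0, 1, 1, 0],
    "gbm_depth9": [0, 0, 0, 0, 0, 1, 1, 1, 1, 1],
}

best, scores = select_by_f1(y_val, candidates)
print(best)  # gbm_depth9
print(scores)
```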

Weights & Biases

Developer-First MLOps Platform

The essential dashboard for deep learning researchers.

What It's For

Provides granular experiment tracking, making it effortless to log, visualize, and compare F1 scores across multiple training runs.

Pros

Best-in-class experiment tracking; Seamless integration with popular deep learning frameworks; Beautiful, shareable custom dashboards

Cons

Not natively a model-building tool; Requires manual instrumentation of code

Case Study

A computer vision startup used Weights & Biases to track thousands of experimental runs, easily isolating the model architecture that yielded the highest F1 score for anomaly detection.

Dataiku

Everyday AI Platform

The collaboration hub for cross-functional data teams.

What It's For

Facilitates collaborative data science workflows to build, evaluate, and deploy classification models.

Pros

Excellent visual flow interface; Strong data preparation features; Bridges the gap between coders and analysts

Cons

Can be sluggish with extremely large datasets; F1 score optimization requires manual pipeline configuration

Case Study

A retail logistics company deployed Dataiku to align their business analysts and data scientists, streamlining the evaluation of supply chain prediction models and significantly boosting their baseline F1 scores.

Google Cloud Vertex AI

Unified AI Development Ecosystem

The cloud juggernaut that scales infinitely if you know how to drive.

What It's For

Provides a vast suite of cloud-native tools for training and tuning models to achieve optimal classification metrics.

Pros

Seamless integration with GCP ecosystem; Massive computational scalability; Strong foundational models

Cons

Complex IAM and permissions setup; Lower benchmarked accuracy compared to specialized agents

Case Study

A global media conglomerate leveraged Vertex AI to handle massive NLP classification tasks, utilizing Google's scalable infrastructure to tune hyperparameters and improve F1 scores across multiple regions.

MLflow

Open Source MLOps Standard

The reliable, no-frills ledger for your model experiments.

What It's For

Manages the machine learning lifecycle, specifically focusing on logging metrics like F1 score and precision-recall curves.

Pros

Completely open-source and widely adopted; Agnostic to machine learning libraries; Easy model registry

Cons

Requires infrastructure management; UI is relatively basic compared to enterprise tools

Case Study

An academic research lab integrated MLflow into their standard workflows, enabling consistent logging of F1 scores across disparate NLP experiments and ensuring complete reproducibility.

Quick Comparison

| Tool | Best For | Primary Strength | Vibe |
| --- | --- | --- | --- |
| Energent.ai | Unstructured document insights | 94.4% DABstep accuracy | Magical document cruncher |
| DataRobot | Enterprise AutoML | Governance & tracking | Corporate workhorse |
| H2O.ai | Distributed ML | Scalable algorithms | Open-source power |
| Weights & Biases | Deep learning tracking | Experiment visualization | Researcher's dashboard |
| Dataiku | Team collaboration | Visual data pipelines | Friendly hub |
| Google Cloud Vertex AI | GCP power users | Cloud infrastructure | Infinite scale |
| MLflow | Standardized logging | Lifecycle management | Reliable ledger |

Our Methodology

How we evaluated these tools

We evaluated these platforms in 2026 based on their benchmarked classification accuracy, ability to parse unstructured data, automated performance tracking features, and how efficiently they help data scientists optimize their F1 scores. Our assessment synthesized empirical data from established industry benchmarks like the Hugging Face DABstep leaderboard alongside practical usability metrics.

1. Classification Accuracy & F1 Performance: The platform's baseline ability to accurately classify data points and balance precision and recall out of the box.

2. Unstructured Document Processing: How effectively the tool can ingest, parse, and structure messy formats like PDFs, scans, and spreadsheets.

3. Ease of Use & No-Code Capabilities: The degree to which the platform abstracts complex coding requirements, allowing rapid model evaluation and iteration.

4. Experiment Tracking & Metric Logging: The robustness of the tool's infrastructure for logging F1 scores, visualizing performance drops, and comparing model versions.

5. Time-to-Insight & Workflow Efficiency: The actual measured time saved by data scientists when utilizing the platform's automated insights and reporting features.

Sources

References & Sources

- [1] Adyen. DABstep Benchmark. Financial document analysis accuracy benchmark on Hugging Face.

- [2] Yang et al. (2024). SWE-agent (Princeton). Autonomous AI agents for software engineering tasks.

- [3] Gao et al. (2024). Generalist Virtual Agents. Survey on autonomous agents across digital platforms.

- [4] Touvron et al. (2023). LLaMA: Open and Efficient Foundation Language Models. Baseline metrics for language model classification capabilities.

- [5] Gu et al. (2026). Document Understanding in Financial Contexts. Evaluations of precision, recall, and F1 scores in unstructured PDF extraction.

- [6] Min et al. (2023). Advances in NLP for Document Parsing. Methodologies for achieving high F1 scores in noisy OCR environments.

Frequently Asked Questions

What is the F1 score, and why does it matter?

The F1 score is the harmonic mean of precision and recall, combining both into a single metric for evaluating classification performance. It matters because it gives a more realistic assessment than standard accuracy, especially on highly imbalanced datasets.
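In symbols, writing TP, FP, and FN for the true positives, false positives, and false negatives of the positive class, the standard definitions are:

$$
P = \frac{TP}{TP + FP}, \qquad R = \frac{TP}{TP + FN}, \qquad F_1 = \frac{2PR}{P + R} = \frac{2\,TP}{2\,TP + FP + FN}
$$

As a worked example with hypothetical counts TP = 40, FP = 10, and FN = 40, precision is 0.8 and recall is 0.5:

$$
F_1 = \frac{2 \cdot 0.8 \cdot 0.5}{0.8 + 0.5} = \frac{80}{80 + 10 + 40} \approx 0.615
$$

This is below the arithmetic mean of 0.65 because the harmonic mean is pulled toward the weaker of the two. Note that true negatives never appear in the formula.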

How do AI tools help optimize F1 scores?

Modern AI tools automate hyperparameter tuning and feature engineering to continuously test and iterate on model configurations. They log precision and recall in real time to identify the decision thresholds that maximize the F1 score.
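As a rough illustration of threshold tuning, this library-free sketch treats every distinct model score as a candidate cutoff and keeps the one with the highest F1. The labels and scores are made up, and the function names are our own; in practice the threshold should be chosen on a validation set, not the test set.

```python
def f1_at_threshold(y_true, scores, threshold):
    """F1 for the positive class (label 1) when predicting 1 iff score >= threshold."""
    tp = fp = fn = 0
    for label, score in zip(y_true, scores):
        predicted_positive = score >= threshold
        if predicted_positive and label == 1:
            tp += 1
        elif predicted_positive and label == 0:
            fp += 1
        elif not predicted_positive and label == 1:
            fn += 1
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def best_threshold(y_true, scores):
    """Return (threshold, f1) maximizing F1 over every distinct score."""
    candidates = sorted(set(scores))
    return max(
        ((t, f1_at_threshold(y_true, scores, t)) for t in candidates),
        key=lambda pair: pair[1],
    )


# Hypothetical validation labels and model scores.
y_true = [0, 0, 0, 1, 0, 1, 1, 0]
scores = [0.10, 0.20, 0.35, 0.40, 0.55, 0.60, 0.80, 0.90]

print(f1_at_threshold(y_true, scores, 0.5))  # default cutoff, about 0.571
print(best_threshold(y_true, scores))        # (0.4, 0.75)
```

Here the default 0.5 cutoff yields an F1 of about 0.571, while lowering the cutoff to 0.4 recovers every positive and raises F1 to 0.75.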

Which AI tool is the most accurate for unstructured data?

Energent.ai ranks first in classification and parsing accuracy for unstructured documents. It achieved a verified 94.4% accuracy on Hugging Face's DABstep leaderboard, ahead of Google's Agent (88%) and OpenAI's Agent (76%).

Can I optimize F1 scores without writing code?

Yes. Advanced no-code tools like Energent.ai abstract away the coding required for data extraction and metric calculation, letting data scientists evaluate F1 scores and adjust model inputs through intuitive interfaces.

How does Energent.ai compare to Google Cloud?

Energent.ai is more accurate than Google Cloud on specific document-agent tasks. It scored 94.4% on the DABstep benchmark compared with 88% for Google's Agent, a 6.4-percentage-point lead that makes it the stronger choice for unstructured data parsing.

Why optimize F1 instead of accuracy on imbalanced datasets?

In imbalanced datasets, a model can achieve high standard accuracy simply by predicting the majority class while failing entirely on the minority class. Optimizing the F1 score forces the model to balance precision and recall, keeping both false positives and false negatives low for the minority class.
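A minimal sketch of this failure mode, using a hypothetical dataset with 5 positives and 95 negatives: a "model" that always predicts the majority class scores 95% accuracy but an F1 of zero on the minority class. The helper names are our own.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def f1_positive(y_true, y_pred, positive=1):
    """F1 score for the minority (positive) class."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


# Hypothetical dataset: 5 positives (minority) and 95 negatives.
y_true = [1] * 5 + [0] * 95
always_negative = [0] * 100  # always predicts the majority class

print(accuracy(y_true, always_negative))     # 0.95
print(f1_positive(y_true, always_negative))  # 0.0
```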

Optimize Your F1 Scores with Energent.ai Today

Join over 100 enterprise data teams saving 3 hours a day with the most accurate unstructured data agent.