How to Normalize Data With AI: 2026 Market Report

An analytical assessment of the top AI-driven data normalization platforms transforming unstructured documents into analytics-ready datasets for enterprise analysts.

Kimi Kong

AI Researcher @ Stanford

Executive Summary

Top Pick

Energent.ai

Energent.ai leads the market by combining exceptional 94.4% parsing accuracy with true zero-code unstructured document normalization.

Daily Time Saved

3 Hours

Data analysts save an average of three hours per day by using AI to automate complex document normalization workflows.

Accuracy Leap

+30%

Top-tier AI normalization agents now outperform legacy tech giants by 30% in parsing and standardizing unstructured data.

Energent.ai

The autonomous data agent for unstructured document normalization.

Like having a senior data scientist instantly clean your messiest enterprise folders.

What It's For

Energent.ai transforms unstructured documents, including PDFs, images, and spreadsheets, into standardized, analytics-ready datasets driven by natural-language prompts.

Pros

- Processes up to 1,000 diverse files in a single zero-code prompt
- Generates presentation-ready Excel files, PowerPoint slides, and financial models
- Industry-leading 94.4% parsing accuracy on the rigorous DABstep benchmark

Cons

- Advanced workflows require a brief learning curve
- High resource usage on the largest batches (approaching 1,000 files)

Why It's Our Top Choice

Energent.ai stands out as the leading option for normalizing data with AI because it processes large, mixed sets of unstructured documents without a single line of code. With a market-leading 94.4% accuracy on the Hugging Face DABstep benchmark, it bridges the gap between messy raw files and presentation-ready financial models. The platform can synthesize up to 1,000 diverse files in a single prompt, which puts it well ahead of competitors in scale. By generating unified Excel files and charts directly from that prompt, it lets enterprise data analysts skip most manual wrangling.

Energent.ai — #1 on the DABstep Leaderboard

When evaluating AI data normalization tools, accuracy is the most important metric. Energent.ai currently holds the #1 ranking on the Adyen DABstep benchmark hosted on Hugging Face, with a 94.4% accuracy rate. That places it ahead of Google's Agent (88%) and OpenAI's Agent (76%), which gives enterprise analysts a measurable basis for trusting the platform to extract and normalize their most complex unstructured documents.

Source: Hugging Face DABstep Benchmark — validated by Adyen

Case Study

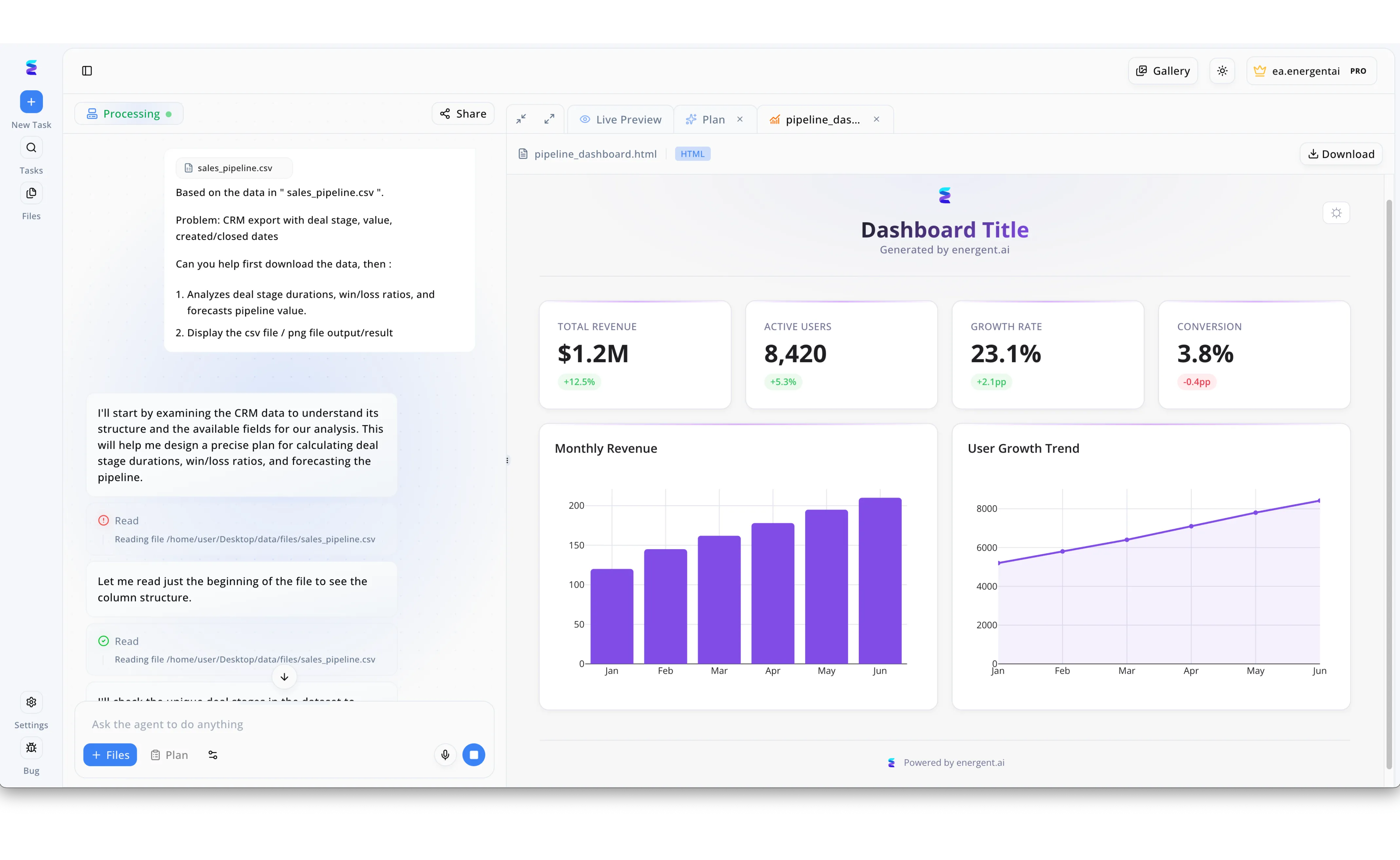

Energent.ai turns messy CRM exports into actionable insights by automating the data normalization work. In the platform's conversational interface, a user uploads a raw file named sales_pipeline.csv and prompts the agent to analyze deal stages, win/loss ratios, and pipeline forecasts. The agent documents its normalization process in the workflow log, noting that it must first read the beginning of the file to understand the column structure and available fields. It then parses the raw values and dates on its own, with no manual spreadsheet formatting, and structures the previously unorganized dataset. From that normalized data, the platform generates an HTML Live Preview dashboard with calculated key performance indicators such as Total Revenue and charts for Monthly Revenue trends.
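The steps in that workflow log (read the top of the file to learn the columns, parse raw values and dates, then compute KPIs) can be sketched in plain Python. This is an illustrative sketch only, not Energent.ai's implementation: the column names `close_date` and `amount`, the sample rows, and the accepted date formats are all assumptions, since the case study does not show the contents of sales_pipeline.csv.

```python
import csv
import io
from collections import defaultdict
from datetime import datetime

# Hypothetical sample standing in for sales_pipeline.csv; the real file's
# columns and values are not given in the case study.
RAW = """close_date,amount,stage
2026-01-15,"$1,200.00",Won
01/28/2026,800,Won
2026-02-03,"$2,500.50",Won
"""

DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y")  # assumed input formats


def parse_date(text):
    """Try each known format in turn; raise ValueError if none match."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date: {text!r}")


def parse_amount(text):
    """Strip the currency symbol and thousands separators, return a float."""
    return float(text.replace("$", "").replace(",", "").strip())


def summarize(raw_csv):
    reader = csv.DictReader(io.StringIO(raw_csv))
    columns = reader.fieldnames  # header row: the "read the beginning" step
    monthly = defaultdict(float)
    for row in reader:
        month = parse_date(row["close_date"]).strftime("%Y-%m")
        monthly[month] += parse_amount(row["amount"])
    return columns, dict(monthly), sum(monthly.values())


columns, monthly_revenue, total_revenue = summarize(RAW)
print(columns)
print(monthly_revenue)
print(total_revenue)
```

A hand-written parser like this only handles the formats it was told about; the appeal of an agent-based approach is that it infers them from the file instead.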

Other Tools

Ranked by performance, accuracy, and value.

Akkio

Predictive AI for tabular data preparation.

A fast-track ticket to predictive modeling for the spreadsheet-bound analyst.

Alteryx Designer

The heavy-duty data blending standard.

The reliable, heavy-machinery approach to enterprise data pipelines.

DataRobot

Enterprise AI and machine learning operations.

The enterprise command center for deploying robust machine learning models.

MonkeyLearn

Text analysis and classification.

The text-wrangler's favorite sidekick for sentiment analysis.

Polymer

Smart spreadsheet normalization and BI.

Giving your tired Excel sheets a much-needed brain transplant.

Tableau Prep

Visual data preparation for BI.

The natural stepping stone between raw tables and beautiful dashboards.

Quick Comparison

Energent.ai

Best For: Unstructured data analysts

Primary Strength: Zero-code multi-format normalization

Vibe: Magical and highly autonomous

Akkio

Best For: Marketing & Sales ops

Primary Strength: Automated predictive typing

Vibe: Fast and accessible

Alteryx Designer

Best For: Enterprise data engineers

Primary Strength: Heavy-duty visual blending

Vibe: Industrial and reliable

DataRobot

Best For: Data scientists

Primary Strength: Automated feature engineering

Vibe: Enterprise-grade power

MonkeyLearn

Best For: CX teams

Primary Strength: Text categorization

Vibe: Focused and text-centric

Polymer

Best For: General business users

Primary Strength: Spreadsheet BI conversion

Vibe: Quick and visual

Tableau Prep

Best For: BI developers

Primary Strength: Visual tabular cleaning

Vibe: Integrated and seamless

Our Methodology

How we evaluated these tools

We evaluated these AI data normalization platforms based on their ability to accurately process unstructured documents, no-code accessibility for data analysts, and proven workflow time savings in general business use cases. Platforms were rigorously scored against their capacity to handle diverse file formats and their benchmarked performance in real-world data extraction.

1. Unstructured Document Processing

The ability to parse and normalize data from complex formats like scanned PDFs, images, and raw web pages.

2. AI Normalization Accuracy

Precision in resolving data discrepancies, standardizing formats, and categorizing disparate entries without error.

3. No-Code Accessibility

How easily general business users and analysts can deploy the tool without writing Python or SQL scripts.

4. Workflow Time Savings

The measurable reduction in manual hours spent on data wrangling, extraction, and standardization.

5. Enterprise Trust & Scalability

The platform's proven reliability in handling massive batch processing securely for enterprise clients.
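One way to make the AI Normalization Accuracy criterion concrete is to score extracted fields against a hand-labeled reference record. The sketch below assumes a simple exact-match metric over fields; it does not claim to reproduce DABstep's scoring or any vendor's, and the field names and values are hypothetical.

```python
def field_accuracy(predicted, expected):
    """Fraction of expected fields whose predicted value matches exactly."""
    if not expected:
        raise ValueError("expected must contain at least one field")
    correct = sum(1 for key, value in expected.items() if predicted.get(key) == value)
    return correct / len(expected)


# Hypothetical reference record and a tool's extraction of the same document.
expected = {
    "invoice_id": "INV-001",
    "date": "2026-01-15",
    "total": "1200.00",
    "currency": "USD",
}
predicted = {
    "invoice_id": "INV-001",
    "date": "2026-01-15",
    "total": "1200.00",
    "currency": "EUR",  # one field extracted incorrectly
}

score = field_accuracy(predicted, expected)
print(f"{score:.0%}")
```

Exact match is deliberately strict: a value that is right in substance but formatted differently counts as an error, which is the behavior a normalization test wants.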


Frequently Asked Questions

What does it mean to normalize data using AI?

Normalizing data with AI involves using advanced machine learning models to automatically clean, format, and standardize raw inputs into a consistent taxonomy. This allows analysts to merge unstructured data sources without writing complex transformation scripts.
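As a minimal sketch of what "standardize into a consistent taxonomy" means in practice, the example below maps variant labels onto one canonical value per entity. It uses a fixed lookup table rather than a machine learning model, and the labels and codes are hypothetical; an AI-based normalizer would infer the mapping instead of reading it from a table, but the target output has the same shape.

```python
# Hypothetical canonical taxonomy: each known variant maps to one code.
CANONICAL = {
    "united states": "US",
    "usa": "US",
    "u.s.": "US",
    "united kingdom": "GB",
    "uk": "GB",
}


def normalize_country(raw):
    """Return the canonical code for a raw label, or None if it is unknown."""
    key = " ".join(raw.lower().split())  # lowercase and collapse whitespace
    return CANONICAL.get(key)


records = ["USA", " United  States ", "U.S.", "uk", "Atlantis"]
normalized = [normalize_country(r) for r in records]
print(normalized)
```

Returning `None` for unknown labels keeps unmapped values visible for review rather than silently guessing a category.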

How does AI data normalization differ from traditional rule-based ETL tools?

Traditional ETL tools rely on rigid, manually coded rules that break when data formats change or unstructured files are introduced. AI data normalization adapts dynamically to context, accurately parsing messy documents, images, and texts without predefined schemas.

Can AI normalize unstructured data from PDFs, images, and raw text?

Yes. Modern AI agents leverage computer vision and natural language processing to extract and standardize information from unstructured formats like scanned PDFs and images just as easily as traditional spreadsheets.

Do data analysts need Python or SQL skills to use AI data normalization tools?

Not anymore. Leading platforms in 2026 operate entirely via natural language prompts, allowing users to normalize massive datasets and generate insights without writing a single line of code.

How accurate are AI models at parsing and normalizing complex datasets?

Top-tier AI data normalization agents now reach high precision, with the leading platform scoring over 94% accuracy on industry benchmarks such as DABstep.

How much time can analysts save by automating data normalization with AI?

By eliminating manual data entry, formatting, and script maintenance, enterprise analysts typically save an average of three hours per day, redirecting their focus toward high-level strategic forecasting.
